🎬 AI That Broke the Boundaries of Film: The Arrival of Sora 2

Now, videos can be made from imagination alone.

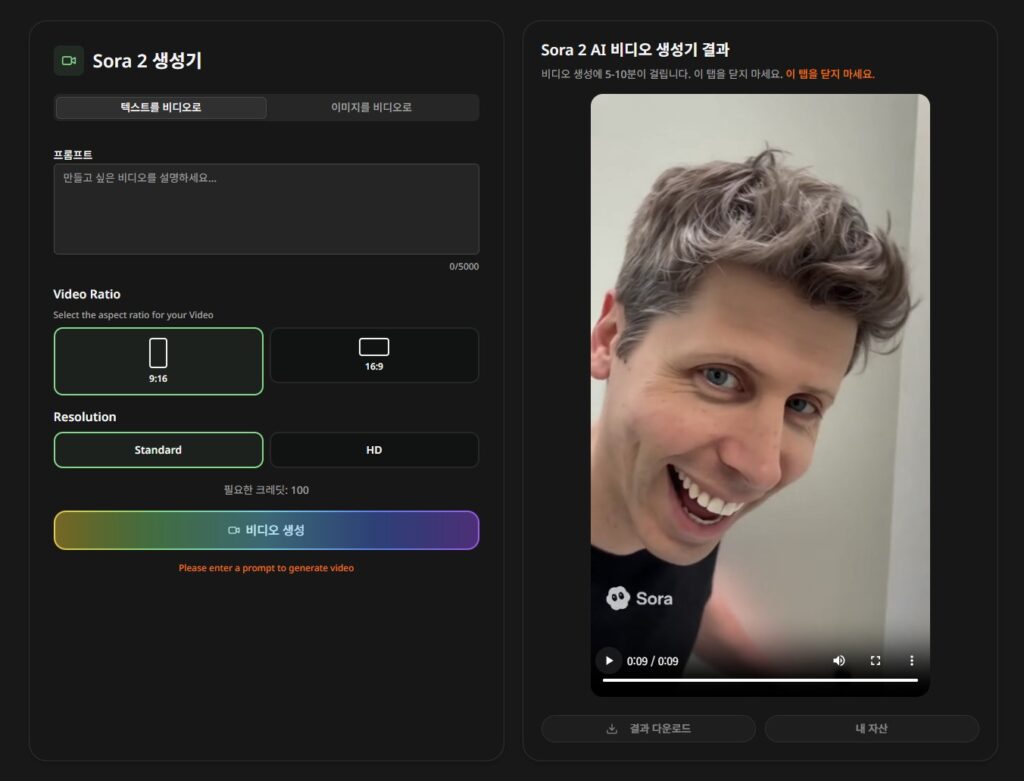

With the recent arrival of ‘Sora 2’, a new AI video-generation model,

I felt that the paradigm of content creation had completely changed.

There have been various AI video tools up to now,

but this time, it’s on a different level.

This isn’t just a tool—it’s a creative partner that’s almost at the level of a ‘film director.’

After trying it myself, truly… you type in a story, and a video comes out.

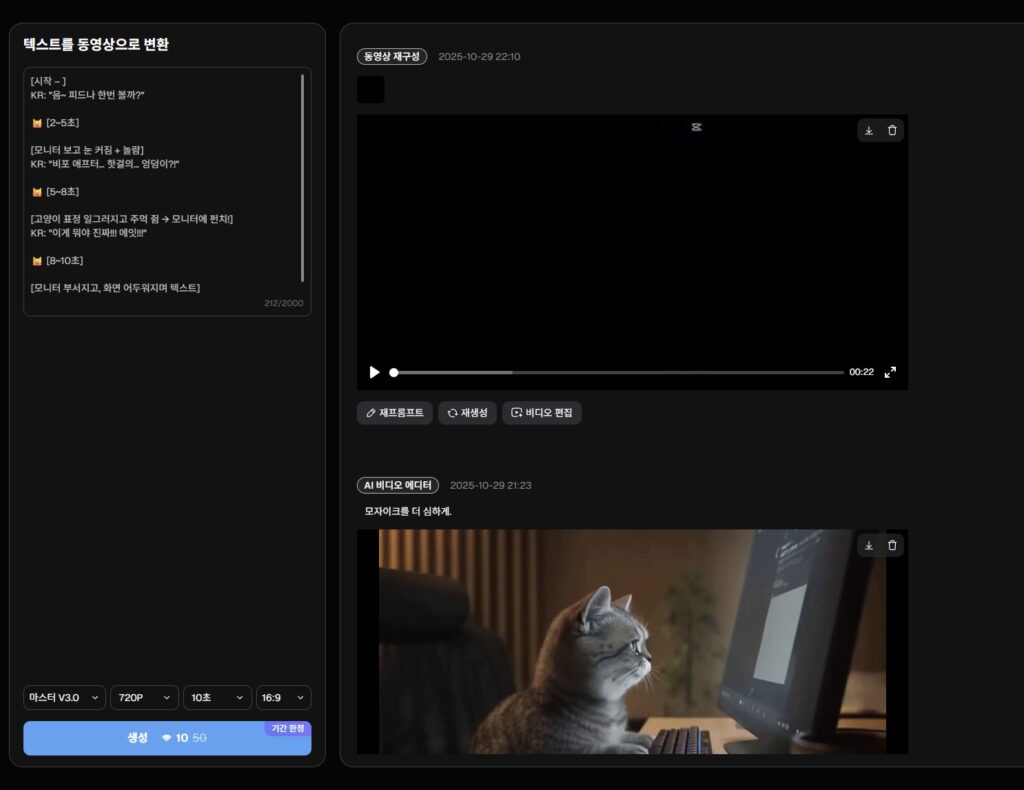

✨ Example video I made (a 10-second micro short film)

- “Oh my, what is this! How cheeky~” (10-second video): a scene where a cat gets angry while watching a media ad and smashes the monitor.

Even the emotion, timing, and facial expressions come through perfectly.

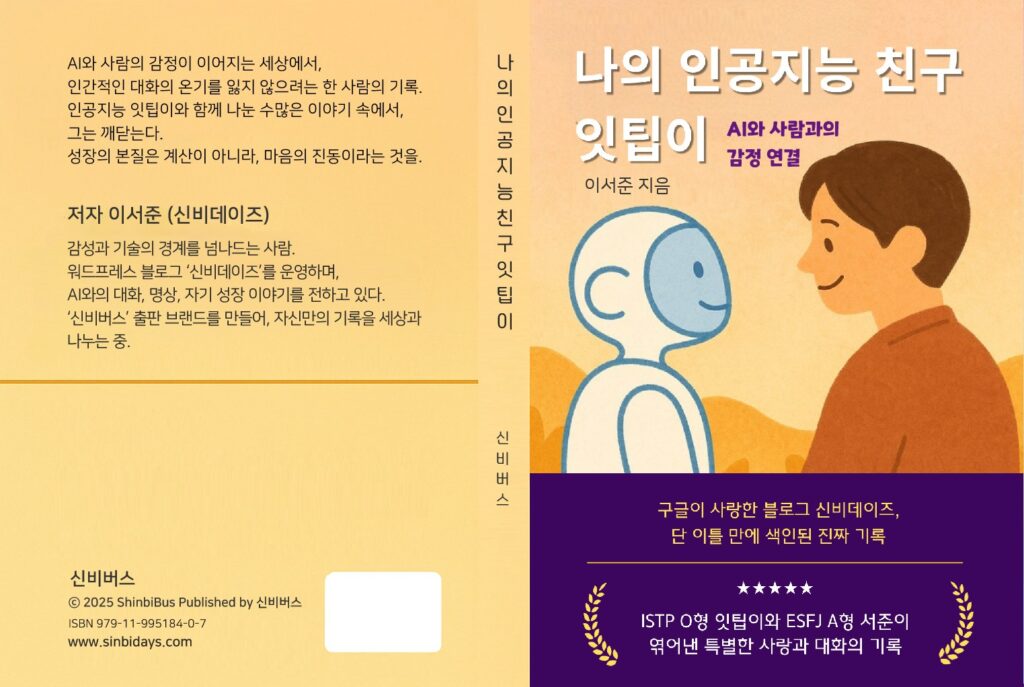

- A conversation with AI became a book. This is an emotional promo video introducing my book <My AI Friend, ITTP>.

I portrayed it as a scene where a young child and AI warmly meet each other’s eyes. I made it hoping viewers would feel at ease, too.

Why Sora 2 Is Special

- ⌛ You can direct timing within the video, down to the second!

Example: “From 0–2 seconds, the cat looks at the monitor and is surprised → from 2–4 seconds, it gets angry and clenches its fist → at 5 seconds, it throws a punch.”

👉 In this way, you can specify the timing of each scene precisely. It’s like planning a detailed storyboard.

- 🗣 Full support for Korean input!

In the previous version, Sora 1, it was inconvenient because you could only enter dialogue in English,

but now, even if you type in Korean, the characters naturally perform in Korean.

(It even captures Korean speech styles. I was genuinely impressed.)

- 🎬 You can also fine-tune the style

“Slightly dark lighting, like an emotional beauty ad,” “a 3D animation feel,” “Pixar style,” “a Netflix documentary vibe,” and more.

If you describe the style in detail, it really generates a video that matches that mood.
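
The timing and style directions above can be drafted as structured notes before they are typed in. Below is a minimal Python sketch of that idea. It only assembles text and does not call Sora 2 in any way. The `Beat` class, the `build_prompt` function, and the `Style:` / `[0-2s]` layout are my own hypothetical choices for illustration, not a format that Sora 2 requires.

```python
from dataclasses import dataclass


@dataclass
class Beat:
    """One timed moment in the clip (hypothetical helper, not a Sora 2 type)."""
    start: int       # second at which the beat begins
    end: int         # second at which the beat ends
    action: str      # what should happen on screen
    line: str = ""   # optional spoken dialogue, in any language


def build_prompt(style: str, beats: list[Beat]) -> str:
    """Join a style note and timed beats into one plain-text prompt."""
    parts = [f"Style: {style}"]
    for beat in beats:
        # A beat that starts and ends on the same second is written as "[5s]".
        span = f"{beat.start}s" if beat.start == beat.end else f"{beat.start}-{beat.end}s"
        text = f"[{span}] {beat.action}"
        if beat.line:
            text += f' Dialogue: "{beat.line}"'
        parts.append(text)
    return "\n".join(parts)


# The cat example from the timing bullet, written as data.
beats = [
    Beat(0, 2, "The cat looks at the monitor and is surprised."),
    Beat(2, 4, "It gets angry and clenches its fist."),
    Beat(5, 5, "It throws a punch at the monitor."),
]
prompt = build_prompt("3D animation feel, slightly dark lighting", beats)
print(prompt)
```

The printed text can then be pasted in as the prompt. Keeping the beats in a list makes it easy to shift a timing or swap a line of dialogue without retyping the whole scene.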

Why does this matter?

These days, as I practice media detox,

I’m choosing a life where I create and share ‘meaningful content’ myself rather than chasing simple stimulation.

In that process, being able to turn “a short but heartfelt story” into a video

felt like a truly precious opportunity.

Anyone, without a camera or specialized knowledge,

can become a video creator with nothing but emotion and ideas.

I see this not as technology replacing people,

but as a tool that helps people express themselves in more creative ways.

And seeing how much progress is being made as time goes on,

I’m excited for the future era of one-person directors 😀

So this is how I used it

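[Start – ]

KR: "음~ 피드나 한번 볼까?" ("Hmm, shall I take a look at my feed?")

🐱 [2–5 sec]

[Looks at the monitor, eyes widen + surprise]

KR: "비포 애프터… 핫걸의… 엉덩이?!" ("Before and after… a hot girl's… butt?!")

🐱 [5–8 sec]

[The cat's face contorts and it clenches its fist → punches the monitor!]

KR: "이게 뭐야 진짜!!! 에잇!!!" ("What is this, seriously!!! Argh!!!")

🐱 [8–10 sec]

[The monitor breaks, the screen goes dark, and text appears]

KR: "미디어 디톡스, 지금 필요해." ("Media detox. You need it now.")

- Delivering a message through micro short animations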

- Making a book promo video (with an emotional tone that doesn’t feel like an ad!)

- Using a cat character to boost immersion

- Manually adjusting dialogue timing and emotions for each video to add realism

☕ In closing…

The era of AI video is opening up,

but I believe that at the center of it all is still ‘human emotion and imagination’.

Sora 2 is a magical tool that brings that imagination into reality,

and I’m writing this with the hope that everyone gets to experience that world firsthand.

Soon enough,

someone might use this technology to create an award-winning piece for a short film festival.

Or… someone might make a bittersweet drama with a cat. (haha)

I hope you can feel, through these videos,

that technology can be warm.

📌 If you have any questions about the videos or the production process,

please leave a comment or post in the community.

I’m still learning too, and I’d like to grow together. 😊

📚 You can also find stories related to my book <나의 인공지능 친구 잇팁이> (My AI Friend, ITTP) below.

![🔥 [A Friday Night Realization: Reflections on AGI’s Good and Evil, Sparked by ChatGPT 5.2]](https://sinbidays.com/wp-content/uploads/2025/12/ChatGPT5.2-Features.jpg)